Private Post-Training and Inference for Frontier Models

A technical deep dive of Silo, our local-like privacy stack for cloud-based training and inference of trillion-parameter models.

Rudolf Laine, Tanya Verma*

Daniel McCann-Sayles, Jules Drean*

*=Tinfoil

March 16, 2026

Every time you use AI, both the AI company and the cloud provider see your data. Exposing every detail of your conversations, your documents, and your expertise is the price of admission.

To fix that, Workshop Labs has collaborated with Tinfoil to build Silo, the first production-ready multi-GPU private post-training and inference stack for frontier models. With Silo, no one except the customer themselves can see customer data – including Workshop Labs.

When we post-train or serve frontier open-weight models in Silo, your data is processed inside hardware-protected trusted execution environments (TEEs). These are secure environments where your data is only accessible to you; neither we nor the cloud provider can see your data. The TEE cryptographically proves what code is running inside it, and we will get that code audited. Protection extends outside the TEE by encrypting all data at rest with customer-managed keys.

The performance cost for both post-training and inference is less than 10%. A 2-hour training run with Trellis, our post-training stack that’s 50x faster than the best alternative open source implementation, only takes an extra 11 minutes when run inside Silo:

A privacy philosophy for the AI age

The stakes of privacy rise with AI. AI creates the ability to cheaply make enormous amounts of deductions from data. It can also increasingly be trained on a dataset to acquire the skills and expertise within it. Not caring about privacy used to mean getting worse ads, but not caring about it in the age of AI might mean a person loses their job or a company loses their moat to someone else’s AI trained on their data.

In the age of AI, privacy should not be something you need to think about. If you care a lot about it, you shouldn’t have to retreat to small locally-deployed models to maintain it. And even if you don’t think about it at all, you shouldn’t be exposed to the downside risk because the engineers building your tools should’ve taken care of it for you.

AI isn’t private if whoever controls the GPU can see everything

Regardless of any SOC-2 certificates, here’s what happens when you use AI service providers. You have data—for example messages or training data—that you send to your AI service provider. Your data travels over the internet. This is fine, because it’s encrypted end-to-end by TLS so no one can snoop on it. Then, the data is decrypted on the service provider’s machine, passed through some AI model, and encrypted again before being sent back over the network to either the client or encrypted cloud storage.

What that leaves out:

- What goes on when the data is on the machine of the service provider? As far as you know, anything at all. Your data is entirely naked to both the service provider and the cloud provider.

- Who has access to the decryption key for data at-rest? In the standard setup, the AI provider's code can decrypt any customer's data at any time. Even when customer-managed keys are offered, the provider retains decrypt access—the customer only gets the ability to revoke the key entirely. The cloud provider, who operates the underlying key management infrastructure, has a similar level of access.

How do we fix this? Let’s start with the second, easier issue. The distribution of decryption key access is often only thought about for very security-conscious apps like password managers. We’ve made key security stricter than most enterprise apps by having user-specific keys. No one in the world other than you can decrypt your data.

The privacy of data in-use is often left out for a deeper reason. To operate on data, it’s assumed you have to decrypt it. After all: if you want to use an object in a safe, you better open the safe. (Unless, that is, you use homomorphic encryption—but then you have a >1000x slowdown and memory requirement blowup.)

For most of computing history, this was fine. But AI is different. The data is richer, it’s not structured rows in a database but entire conversations, proprietary documents, the way you think and work.

You can always use local deployments, but buying the hardware to run frontier models is extremely expensive and means being responsible for setup, maintenance, and upgrades yourself.

So what most companies actually do is rely on trust. They write a privacy policy, and you take them at their word. But we’ve already seen major AI companies update their terms to retrain user data for longer or train on user data by default, with opt-out buried in settings. And even without deliberate policy changes, things happen: engineers troubleshoot production incidents and encounter real data, competitive pressure to ship sometimes lead to insecure code being shipped, contractors are given overly broad access by accident. Overall, a good litmus test for privacy practices is whether incentives align, and with AI providers they often don’t.

Underneath all of this is a deeper issue: verification. On the internet, TLS gives you a technical guarantee that your ISP can’t read your traffic. There’s no equivalent for "we promise we aren’t looking at your data while we process it." You can’t verify a company policy the way you can verify a cryptographic protocol.

So we need something else. We need a way to process data on someone else's hardware where the operator cannot access it, and where this impossibility is verifiable.

Our approach: multi-GPU trusted execution environments with customer-managed keys for at-rest data

A trusted execution environment (TEE) is a secure environment built into the hardware itself. It has two properties:

-

Isolation The TEE encrypts its memory in hardware: the host OS, the cloud provider, and other tenants cannot read it. The software image running inside is locked down by design: no SSH server, no shell, read-only root filesystem. Tinfoil built this image such that no one can get in from the outside, even if they’re the one who deployed it, and attestation lets you verify this.

-

Remote attestation The TEE can cryptographically prove what code is running inside it to the outside. The fingerprint of the code running in the TEE is signed by the chip manufacturer (AMD, Intel, NVIDIA), not by Workshop Labs or Tinfoil. The signature is unforgeable.

CPU-based TEEs such as Intel TDX, AMD SEV-SNP and AWS Nitro Enclaves have been around for a while and power several trusted security architectures in production today. Apple uses them for Private Cloud Compute. Signal uses them for contact discovery.

In 2023, NVIDIA extended this to GPUs with NVIDIA Confidential Computing. But in practice, GPU TEEs have been limited to single-GPU configurations, and cloud providers like GCP and Azure still only offer this today. With one GPU, even with quantization and parameter-efficient LoRA training, you are limited to models under about 100 billion parameters.

Tinfoil has already extended multi-GPU TEEs to inference, letting them deploy large models with verifiable privacy. For making multi-GPU TEEs operationally viable, Tinfoil worked through full-stack engineering issues across host-side orchestration, cryptographic attestation of the GPU mesh topology, and patches to Ubuntu repositories and the Linux kernel itself. GCP and Azure haven't built this stack, which is why they're still limited to single-GPU TEEs.

Tinfoil provides the TEEs and the infrastructure around them. Workshop built Silo, our integrated post-training stack that consists of our Trellis stack for training, a custom vLLM fork with Kimi K2 Thinking support & modified LoRA for inference, a user-specific key management system, API, and cloud storage, and the application layer for you to interact with your private AI.

Silo’s system architecture

A training job starts as a request from a client to a CPU-only application server that deals with user accounts, keys, managing data sync, and routing requests to the right GPU backends.

An API call to Trellis launches a post-training job on a multi-GPU TEE node. Once the job finishes, the weights are encrypted with a user-specific key and uploaded to cloud storage.

Inference requests verify the user through their key. The inference server runs our custom fork of vLLM, enabling us to serve dozens of users on one cluster at the same time via multi-tenant LoRA—all private to us and to each other.

We use envelope encryption to allow passphrase rotation without re-encrypting all data.

What that gets you

Silo delivers security comparable to local deployments, at a fraction of the cost, and without the dependence on the AI service provider. The audit is pending, but already it is true that Workshop cannot see your data or use your model if training and inference happen on Silo. See the table for details:

In the rest of this post, we walk through the technology behind TEEs and the details of our security stack, showcase how together they block a wide range of threat models, and talk about what’s next.

Technical walkthrough

How TEEs work

When your data is being processed, it exists in six physical locations. For each one, the question is the same: could someone outside the TEE see it? Let's trace the path, considering both software attacks (someone with root on the host) and physical attacks (someone with hands on the hardware).

-

CPU and GPU registers This is where computation actually happens. Data is loaded from memory into registers, operated on, and written back. Registers live inside the physical CPU and GPU dies, inaccessible to any external software or hardware interface.

-

CPU memory (DRAM) Encrypted by the CPU's memory encryption engine (AMD SEV-SNP or Intel TDX), with single-digit-percent performance overhead. The host sees only ciphertext.

-

GPU memory (HBM) Not encrypted, but physically soldered onto the GPU die, not socketed like a RAM stick. No software interface exposes it to the host.

-

CPU ↔ GPU (PCIe) Encrypted end-to-end with AES-GCM. On Nvidia’s Hopper GPUs, this uses a bounce buffer architecture; on the newer Blackwell GPUs, TDISP/IDE provides native inline encryption, eliminating the bounce buffer.

-

GPU ↔ GPU on the same node (NVLink) Hopper does not encrypt NVLink traffic; Blackwell does (AES-GCM). Therefore on Hopper, GPU-to-GPU communication is protected against software attacks but not physical ones.

-

Node ↔ node (InfiniBand) Neither Hopper nor Blackwell supports multi-node TEEs. This doesn't currently limit us, since LoRA and quantization let us post-train frontier open models on a single 8-GPU node. Per-node memory capacity is also growing fast, and Nvidia has announced that the Vera Rubin generation will support multi-node TEEs.

How Workshop and Tinfoil built a stack that they cannot see

The most important privacy property we want to enforce is that we at Workshop Labs cannot see your data or use your model, even if we tried. But how can we deploy a server we can’t access?

The software image on Tinfoil TEEs is locked down by construction. It does not have any SSH server, shell, or debugging tools. There is no way to log in from the outside, even if you’re Tinfoil or Workshop—the most we can do is turn it on or off. The only way data can enter or leave the TEE is through specific whitelisted API endpoints.

But of course, nothing on the TEE side helps if the code running on the TEE is malicious or has vulnerabilities. The Workshop Labs server images that run on the Tinfoil TEE images bind the user's encryption key at the HTTP boundary. Since key decoding, validation, and storage happens before any business logic runs, this helps separate business logic and security.

On every connection, the TEE also delivers an unforgeable attestation report to the connecting client. This gives a hardware-backed proof that, firstly, the client is connecting to an actual TEE. Secondly, it also includes the full hash of the image running on the TEE, attesting that only the right code, and nothing else, is running. Of course, for this to be airtight, the connecting client needs to be able to know whether the hash in the attestation report corresponds to safe or unsafe code. We discuss how we plan to deal with this below in the verification and attestation section.

Software attacks from the cloud provider running arbitrary software on the host machine

We’ve seen that the service provider cannot see inside the TEEs. But we rent GPUs from a cloud provider. They could, in theory, run malicious software on the host machine. What if the cloud provider, or Tinfoil, tries to peek at what's happening inside?

TEEs were designed to prevent this threat. The TEE encrypts all CPU memory, so even with full control over the machine the cloud provider sees only ciphertext.

Side channels

There is one class of software attack that is difficult to fully prevent, called side-channel attacks. A side channel doesn't read your data directly. Instead, it infers secrets from indirect effects of computation, like how long an operation takes, which cache lines get evicted, or how the CPU's branch predictor behaves.

Side channels have always existed, but were a limited threat because many stars had to align for them to be useful. A classic side channel might leak information about a secret value sitting in a CPU register, but only if that value was followed by specific instructions that produced observable timing differences, and only if the attacker could measure those differences precisely enough. This doesn't happen often in practice, and cryptography libraries have long used constant-time implementations as a precaution against timing attacks.

However, in their perennial race to improve CPU performance, CPU manufacturers introduced speculative execution, where the CPU guesses which instructions will run next and starts executing them early. Security researchers, most famously with Spectre, discovered that even though the CPU rolls back incorrectly speculated instructions, the side effects on cache state persist and give an attacker usable information. This made side channels considerably more powerful, and a building block for a broader class of transient execution attacks.

These attacks are real, but very sensitive to noise. They work best in controlled environments where the attacker can trigger specific code paths and repeat the attack many times. Against production workloads processing variable data, they are far harder to pull off. Chipmakers mitigate through microcode updates and hardware changes, but speculative execution is an architectural property of modern CPUs, and side channels as a class remain an active area of research without a clean, complete fix at the silicon level.

Firmware bugs

Hardware isolation is enforced by TEE firmware, which acts as a gatekeeper deciding which software can access which memory. The security of encryption rests on clean mathematical assumptions. Unfortunately, we do not live in a perfect world. The security of TEEs relies on firmware code, which, like all code, can have vulnerabilities that sufficiently motivated adversaries and security research will find. These get patched by chipmakers once discovered, but, like all code security questions, makes this a game of cat and mouse rather than a mathematical proof.

Physical attacks

If you are worried about adversaries that might be able to gain physical access into the data center and deploy destructive attacks against the CPUs and GPUs, then the TEE stack will make their life harder but does not guarantee defense against the most sophisticated attackers. We expand more on this in the appendix.

Verification and attestation

Everything above describes how we isolate your data. Neither Workshop Labs, Tinfoil, or the GPU provider can see your data. This keeps your data confidential*,* but it does not yet prove our trustworthiness to you automatically. But TEEs don't just protect what's running inside, they also prove what’s running inside them.

The design principle we follow is that any attempt to subvert the system's privacy guarantees should either cause the system to fail in a way that no data is shared (fail closed), or leave a publicly discoverable record (verifiable transparency). TEE attestation provides the first property: if the code doesn't match the expected measurement, the client refuses to connect. Transparency logs provide the second: every build ever deployed is permanently recorded, and any deviation from the audited or open-sourced code can be detected after the fact.

The TEE attests the fingerprint of the running code

When the TEE boots up, the hardware measures a cryptographic hash of everything inside it, creating a fingerprint: the firmware, system software such as the operating system and drivers, the application code, the security configuration. This fingerprint is signed by the chip manufacturer (AMD/Intel + NVIDIA), not by us. We cannot forge it. If we changed a single line of code, the hash would be completely different.

This process, called remote attestation, runs on every connection and gives you a hardware-backed guarantee that certain code is running.

Open-sourcing or auditing allows the code to be read and declared safe

But a fingerprint only proves "the TEE is running code X." That isn't useful unless you can check whether code X is safe.

One way to do this is to make the entire code running in the enclave open source. This way you can read every line of what runs inside the TEE and anyone can audit it. If something bad is in there it’s visible forever. This is what Tinfoil does with their stack.

However, practically speaking, most software is not going to be open source. But does that limit the value a TEE can provide? Not necessarily. The strategy is to make the hash public and permanently committed to, without releasing the code the hash is produced from. A trusted third-party auditor can then inspect the code and certify that it meets the required security standards. This is the approach taken by several industry standard TEE deployments, such as Apple Private Cloud and WhatsApp Private Processing. At Workshop Labs, we plan to arrange a formal audit. In the meantime, a formal audit could also be replaced by a prospective customer signing an NDA and analyzing our codebase to their satisfaction.

Of course, auditors are not omniscient, and code can be sneaky. A subtle exfiltration path buried deep in a dependency, triggered only under rare conditions, could in theory survive an audit. To keep the scope of the audit feasible, the millions of lines of code in the various external libraries that Silo uses are not part of the audit, and some will have vulnerabilities. TEEs greatly limit the blast radius by restricting I/O channels, but they don't eliminate all risk. Of course, this limitation is shared even with local deployments, which also rely on external dependencies.

The source-to-binary link

There's one more gap still. The TEE attests a binary compiled from the source code, not the source code directly. Even if the source code has been marked trusted (by being open-sourced and inspected by the community, by being audited, or being reviewed by a specific customer) and the TEE gives you a hash of the binary it is running, how do you verify that the hash matches the source code?

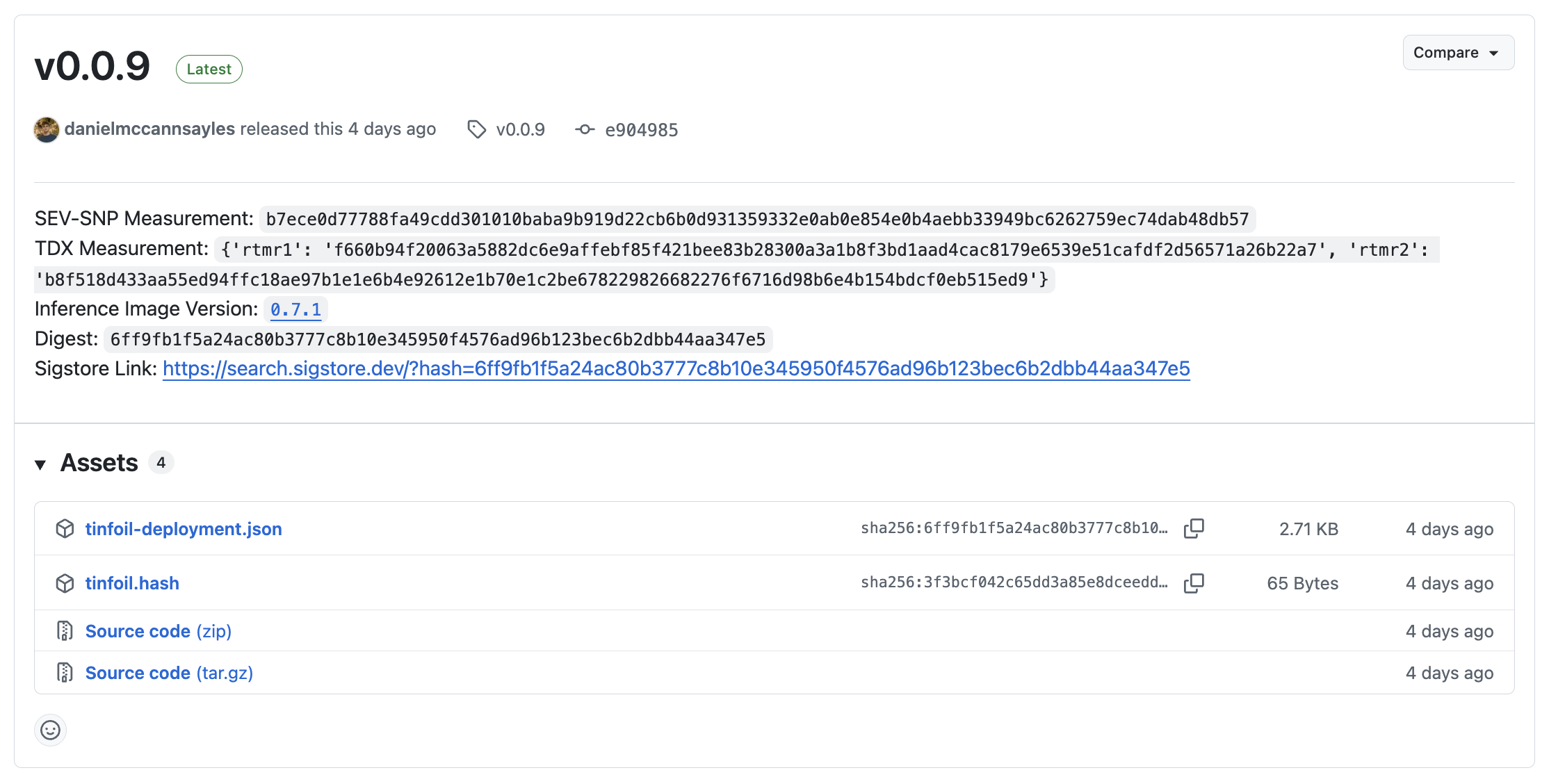

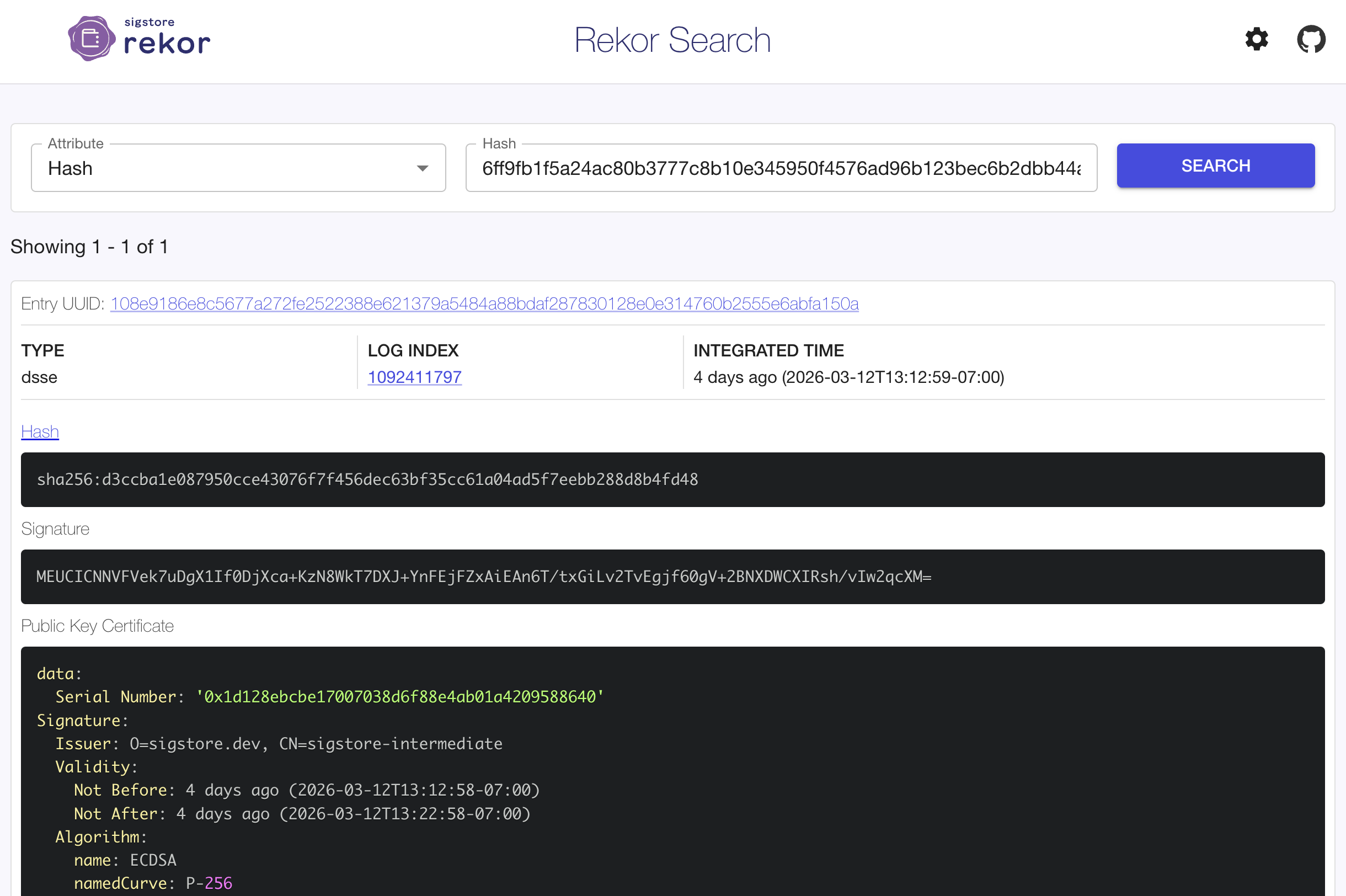

Tinfoil does this by having an automated GitHub Actions worker compile the code of any tagged release and publish its fingerprint to Sigstore, which is a transparency log run by the Linux Foundation, Google, Redhat, and Github amongst others. Sigstore is a public, append-only ledger, where entries cannot be edited or deleted. It gives a permanent, historical log of all deployed versions of our service.

So when you connect, the client will be able to do three things:

- Fetch the fingerprint from Sigstore corresponding to the latest release.

- Fetch the attestation report from the TEE it is connecting to.

- Check that they match. If they do, the server is running exactly the code that we claimed it would be running.

This whole process happens automatically, so you always know that you are connecting to a trustworthy service.

However, note that Tinfoil’s open-source model relies on GitHub's CI infrastructure to faithfully execute the build pipeline. This trust assumption could be removed by making builds bit-for-bit reproducible, so that anyone could rebuild from source and verify they get the same fingerprint, but this presents a challenge for operating system images. The root OS disk is a complete Ubuntu system image with packages from many different maintainers, with non-determinism even from sources like some compilers embedding timestamps into their outputs. Projects like Debian have spent several years cataloguing every source of non-determinism, and doing this for all dependencies in an Ubuntu image would require similar efforts and the participation of all package maintainers. This means that in theory, if both Tinfoil and GitHub colluded, Tinfoil could produce a fake binary with fake measurements.

At Workshop Labs, the source-to-binary connection can largely be verified by the auditor based on build pipeline, CI configuration, and log, but without reproducible builds this bottoms out in also requiring trust in GitHub’s build signing and CI infrastructure.

For context on where the industry sits: Apple publishes every production binary for inspection and a subset of security-critical source code, but not the full source. WhatsApp pins hashes without publishing binaries at all. Tinfoil publishes both the full source and the binary, through an auditable build pipeline. Workshop Labs publishes hashes publicly on the transparency log and will provide full source code to auditors and customers under NDA, which goes further than Apple on source access while also keeping the codebase private. The remaining gap is the GitHub trust assumption, which Tinfoil is actively working on.

How updates work

How do updates work? Any updates will change the hash, and code changes fast, so it’s not realistic to have an auditor review every single release. Workshop plans to handle this two ways:

-

Once the initial audit is completed, the auditor reviews the accumulated difference every few months to ensure no security issues have been introduced.

-

Every hash is permanently recorded on Sigstore with a timestamp. We can’t alter or remove any entries. So a customer or auditor could always ask us to provide the source for any specific version of the codebase, and we will be unable to fake it. The regular audits mean we couldn’t be indefinitely malicious, but even in the event where we were briefly malicious, this would call our bluff.

We also expect that, with AI helping reduce the time and cost of an audit, we can ramp up audit frequency until eventually every update is reviewed before going live.

Tinfoil has already pioneered and proven the version of this model that uses open-sourcing as the trust basis.

Session binding: proving you're talking to the box you attested

Attestation tells you what code a TEE is running. But how do you know the server you're actually communicating with is that TEE running that code, and not something else impersonating it?

This is the binding problem, and Tinfoil solves it by embedding cryptographic identity into the attestation report itself. When the TEE boots, it generates a fresh TLS private key and places the fingerprint of the corresponding public key directly into the attestation report's reportData field, which is the field that gets signed by the hardware. This creates a chain that can't be faked: the chip manufacturer's signature proves that this specific TLS key belongs to this specific TEE running this specific code.

When a client connects, it verifies the attestation report, extracts the TLS fingerprint, and pins the TLS connection to it. The client checks that the server's TLS certificate public key matches the fingerprint from the attestation. If it doesn't match, the connection is refused. This is the primary binding mechanism for all TEE connections.

For inference specifically, the attestation report also includes an HPKE (Hybrid Public Key Encryption) public key. This allows clients to encrypt request and response bodies end-to-end to a key that only the attested TEE holds, which is useful in environments where TLS certificate pinning isn't available (such as browsers).

The result is that verifying an attestation report and talking to the attested server are not two separate acts of faith. They are cryptographically bound and you can’t impersonate an attested TEE without possessing the private keys that were generated inside it.

Roots of trust and limitations

We've traced the full chain together. Data is encrypted in transit by TLS, encrypted at rest with keys only you hold, and processed inside hardware-isolated TEEs whose code is attested and verifiable. We've walked through software attacks, firmware bugs, side channels, physical interposers, and chip-level intrusions. So what's left?

- Hardware from AMD/Intel and Nvidia. Their chips generate the attestation signatures, manage the encryption keys, and enforce the memory isolation boundaries. If their silicon is compromised, everything built on top of it falls.

- The internet's public key infrastructure: the certificate authorities and browser vendors that authenticate TLS connections. This is the same trust assumption you make every time you visit any website, and it applies equally to local deployments that connect to the internet.

- The audit that declares the source code inside the TEE safe.

What you do not need to trust, in theory, is anyone in the service provider stack: not Workshop Labs, not Tinfoil, not the GPU cloud provider. That's the whole point of the architecture. In practice, this claim comes with caveats: the audit hasn't happened yet, the source-to-binary link depends on GitHub, auditors can miss things in the dependency tree, DRAM encryption is deterministic, and side channels remain an open problem.

Looking further ahead, the asymmetric cryptography underlying attestation and key exchange (ECDSA, X25519, ECDHE) is vulnerable to quantum computers. The symmetric encryption protecting memory (AES) is not. Post-quantum standards for the relevant protocols are in progress, and we plan to adopt them as they become available in the hardware we depend on.

It would be dishonest to pretend there’s no gap between this system and perfect security. But at both Workshop Labs and Tinfoil, we still think the gap between this system and standard AI service provider deployment practices is enormous.

What's next

Silo prevents Workshop Labs, Tinfoil, or the cloud provider from accessing any user data during post-training, inference, or when models or data are stored in the cloud. This is the trust backbone behind making it easy for everyone to customize and benefit from AI without worrying about their data being harvested, trained on, or surveilled.

If you or your company is interested in private post-training, sign up to our waitlist here.

We’re also excited to collaborate with security researchers to make Silo more robust over time. To that end, if you think we’re missing something, we’d love to hear from you! Tell us what we missed, or ask questions, at [email protected].

We thank Sacha Servan-Schreiber, Addie Foote, Kyle Morris, Esben Kran, Finn Metz, Gregory Kollmer, Agustín Covarrubias, and Devansh Pandey for reviewing drafts of this post.

Appendix: Physical attacks from inside the data center

Physical attacks have traditionally been considered out-of-scope by TEE manufacturers. For instance, NVIDIA explicitly lists fault injection and other invasive physical attacks as non-covered by their technology. Nevertheless, these remain realistic threats that need to be taken into account.

Researchers have recently demonstrated (TEE.fail, BadRAM, Battering RAM) that physical attacks on TEEs were practical. However, they still require a level of invasiveness that is hard to assume would stay unnoticed in a datacenter. On the other hand it is now required to assume a sophisticated attacker can extract TEE keys in their own lab. This necessitates greater focus on supply chain security and hardware provenance transparency than before.

So to defend against invasive physical attacks, the best mitigation is strong data center security, but most importantly keeping (as Tinfoil does) a close tab on what chips are authorized to take part in their TEE systems. In the rest of this appendix, we will give an overview of existing physical attacks on TEEs to show they are practical for an advanced attacker but inherently invasive.

There are two main types of physical attack on TEEs.

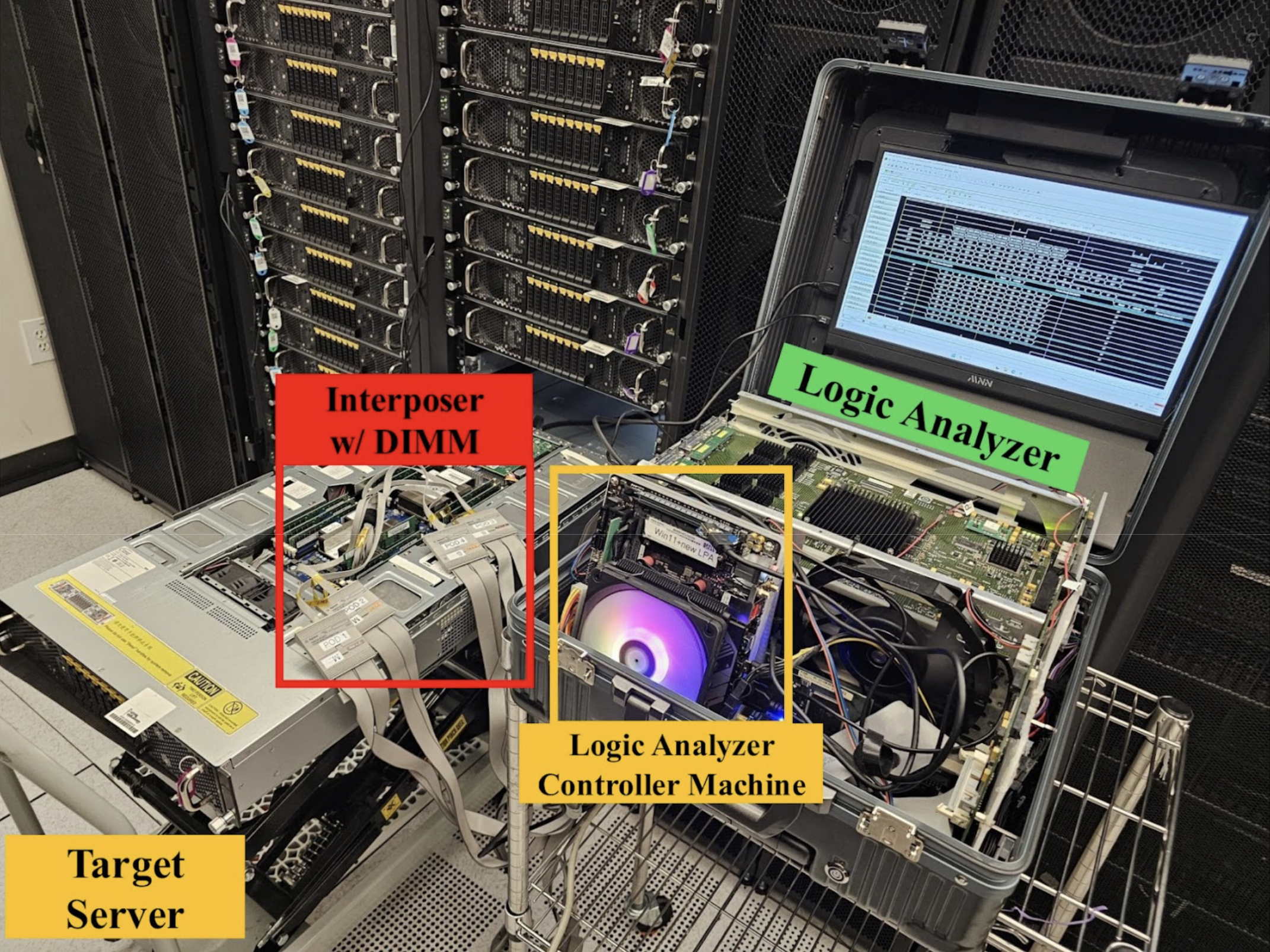

One is physically tapping wires between components. In practical terms, you can do this with a memory interposer, a small chip that can be slotted between, for example, the RAM stick and its socket on the motherboard, and which records everything that passes through. It’s similar to a wiretap on a phone line. Let’s consider five possible locations of the interposer:

- CPU memory (DRAM): An interposer could only capture ciphertext. However, there is a vulnerability: this channel is encrypted with deterministic encryption for performance reasons, meaning the same data at the same address always produces the same ciphertext, and so an attacker could observe and replay these patterns to capture some information. Deterministic encryption is mostly not used anymore in modern day cryptography (instead, encryption takes as input not just plaintext but also a nonce or initialization vector), but here must be due to performance considerations (the CPU to DRAM bus can have a bandwidth of hundreds of gigabytes per second).

- GPU memory (HBM): Not encrypted, but physically bonded onto the GPU package and not socketed like a RAM stick, so you can’t slot an interposer in. Attacking it would involve extremely invasive chip-level probing while at the same time requiring that the program stays running because TEEs don’t have any persistent state (everything is in memory).

- CPU <-> GPU (PCIe): An interposer here would capture ciphertext, but here the encryption uses AES-GCM which is a modern day non-deterministic cryptographic scheme that is not vulnerable to the kinds of replay attacks that would work against CPU memory. This is possible with low performance cost since PCIe bandwidths are around ~64 GB/s, lower than the bandwidth between DRAM and CPU.

- GPU <-> GPU (NVLink): Same, once running on Blackwell GPUs: this uses AES GCM encryption and is not vulnerable.

- Node <-> Node (Infiniband): Once this is available, this also uses AES GCM encryption and isn’t vulnerable.

Physical taps on the CPU or GPU die itself are not feasible with any practical method. The closest semi-practical approach is laser voltage probing, which breaks down below ~20nm due to optical diffraction limits — the wavelength of light is simply too large to isolate individual features — ruling it out entirely for state-of-the-art 3–8nm chips. The next step up, e-beam probing, requires millions of dollars of equipment, weeks of destructive preparation, and realistically can only extract static data from a powered-down chip. This points to the second problem: such an attack would likely cause the chip to power off, either through damage or as a consequence of transferring it from a server rack to a laboratory. Since all user data in a TEE lives only in volatile memory, it would be gone and inaccessible to the attacker. Therefore such attacks would most likely be going after the persistent per-chip identity key rather than data in the TEE itself. Access to this identity key would let an attacker sign attestation reports on behalf of that single chip that appear legitimate (since the identity key is different per chip), so if the attacker did this for the CPU and every GPU involved and also controlled routing from client to the TEE, the client would then be duped into sending the attacker information. However, this would be an incredibly sophisticated operation for an external party to pull off, and would be slightly easier but still difficult even if the GPU provider, Tinfoil, and Workshop Labs were all conspiring together against the user.

The second type of attack is called a fault injection. Instead of tapping a wire to listen, the attacker deliberately disrupts the hardware by glitching the power or firing a laser at a precise moment, in order to make the CPU skip an instruction, flip a bit or produce a wrong result. These attacks directly target fundamental assumptions about hardware correctness itself and are not mitigated by bus encryption, because the goal is to make the hardware misbehave such that it skips a security check or corrupts a cryptographic operation.

NVIDIA explicitly lists fault injection and invasive physical attacks as out of scope. So to defend against invasive physical attacks, the best mitigation is strong data center security, using data centers with high security reputations, and keeping (as Tinfoil does) a close tab of what chips are whitelisted to take part in their TEE systems. Because installing an interposer means opening a server, pulling a RAM stick, inserting the device and reassembling it all in a running data center while keeping the program running, it would cause enough traces that can be collected. This is not a remote attack that can be run from afar, nor a physical attack that can be run cheaply or without traces.